Last updated 2019-09-26T22:00:00Z

Feel free to correct or add more to this “how to” post

Hey Peeps

So something I've noticed lately is that a certain number of users complain about speeds etc. on USB disks, so I thought I'd make a little how-to post.

First off, remember to use a good recommended charger and USB cable, or the cable that came with your device (like the Vero). It's also often recommended to use a self-powered USB drive or a powered USB hub.

Choosing your filesystem

Many people not familiar with Linux will format the drive in Windows and then use it with OSMC. Just remember that NTFS isn't native to Linux, so it will always perform slower than intended. Also, if you have I/O-intensive programs (like torrents) running, it will put additional load on the CPU. NTFS also does not understand Linux permissions, and because of that lack of permissions anyone can change those files without having to worry about passwords.

NTFS performance can be improved using the big_writes option to the mount.
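As a rough sketch of what that looks like, assuming the drive is mounted through ntfs-3g (the FUSE driver that provides big_writes). The device /dev/sda1 and the mount point /mnt/ntfs_test are only examples, and mount_ntfs is a made-up helper name, so check your own device with lsblk first:

```shell
#!/bin/sh
# mount_ntfs is a hypothetical helper, not part of OSMC.
# $1 = partition (e.g. /dev/sda1), $2 = existing mount point directory
mount_ntfs() {
    dev=$1
    mnt=$2
    # Refuse to continue unless the first argument is a block device.
    if [ ! -b "$dev" ]; then
        echo "not a block device: $dev" >&2
        return 1
    fi
    # The mount point has to exist before mounting.
    if [ ! -d "$mnt" ]; then
        echo "mount point does not exist: $mnt" >&2
        return 1
    fi
    # big_writes lets ntfs-3g pass larger write buffers through FUSE
    # instead of splitting them into 4K chunks.
    sudo mount -t ntfs-3g -o big_writes "$dev" "$mnt"
}

# Example (assumed names): mount_ntfs /dev/sda1 /mnt/ntfs_test
```

If OSMC automounts the drive for you, it has to be unmounted before mounting it by hand like this.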

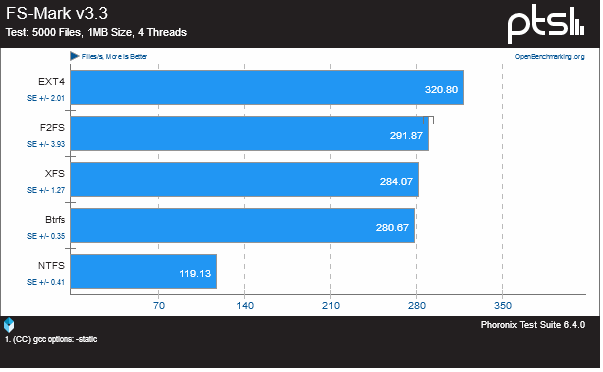

Here is a chart. The program used is FS-Mark, a filesystem benchmark tool. For more comprehensive charts (see link).

Here is a live test on a Vero device

| Filesystem test | Time (secs) | Sys Time (secs) | CPU System (%) | CPU User (%) | Memory Buffer |
|---|---|---|---|---|---|
| time sh -c "dd if=/dev/zero of=/mnt/ntfs_test/test.tmp bs=32k count=2000000 && sync" | 1844 | 284 | 66 | 40 | |
| time sh -c "dd if=/dev/zero of=/mnt/btrfs_test/test.tmp bs=32k count=2000000 && sync" | 1609 | 137 | 16 | 0.1 | |
| time sh -c "dd if=/dev/zero of=/mnt/ext2_test/test.tmp bs=32k count=2000000 && sync" | 1640 | 303 | 7.4 | 0.1 | |
| time sh -c "dd if=/dev/zero of=/mnt/ext4_test/test.tmp bs=32k count=2000000 && sync" | 1596 | 299 | 9.6 | 0.2 | |
| time sh -c "dd if=/dev/zero of=/mnt/exfat_test/test.tmp bs=32k count=2000000 && sync" | 1615 | 156 | 5.4 | 0.2 | |
| time sh -c "dd if=/dev/zero of=/mnt/ntfs_test/test.tmp bs=4k count=10000000 && sync" | 1497 | 241 | 50 | 34 | |
| time sh -c "dd if=/dev/zero of=/mnt/btrfs_test/test.tmp bs=4k count=10000000 && sync" | 1010 | 126 | 14 | 0.1 | |
| time sh -c "dd if=/dev/zero of=/mnt/ext2_test/test.tmp bs=4k count=10000000 && sync" | 1050 | 206 | 5 | 0.2 | |
| time sh -c "dd if=/dev/zero of=/mnt/ext4_test/test.tmp bs=4k count=10000000 && sync" | 1002 | 192 | 11 | 0.1 | |
| time sh -c "dd if=/dev/zero of=/mnt/exfat_test/test.tmp bs=4k count=10000000 && sync" | 1016 | 151 | 8.4 | 0.6 | |
| time sh -c "dd if=/mnt/ntfs_test/test.mkv of=/dev/null bs=4k && sync" (44G File) | 1195 | 146 | 49 | 4 | |
| time sh -c "dd if=/mnt/btrfs_test/test.mkv of=/dev/null bs=4k && sync" (44G File) | 1091 | 182 | 42 | 1 | |
| time sh -c "dd if=/mnt/ext2_test/test.mkv of=/dev/null bs=4k && sync" (44G File) | 1152 | 256 | 30 | 1.6 | |
| time sh -c "dd if=/mnt/ext4_test/test.mkv of=/dev/null bs=4k && sync" (44G File) | 1126 | 175 | 38 | 1.4 | |
| time sh -c "dd if=/mnt/exfat_test/test.mkv of=/dev/null bs=4k && sync" (44G File) | 1176 | 130 | 36 | 2.2 | |
| time sh -c "dd if=/mnt/ntfs_test/test.mkv of=/dev/null bs=32k && sync" (44G File) | 1182 | 115 | 38 | 2.5 | |
| time sh -c "dd if=/mnt/btrfs_test/test.mkv of=/dev/null bs=32k && sync" (44G File) | 1083 | 187 | 30 | 0.5 | |
| time sh -c "dd if=/mnt/ext2_test/test.mkv of=/dev/null bs=32k && sync" (44G File) | 1161 | 245 | 28 | 0.6 | |
| time sh -c "dd if=/mnt/ext4_test/test.mkv of=/dev/null bs=32k && sync" (44G File) | 1150 | 229 | 36 | 0.5 | |
| time sh -c "dd if=/mnt/exfat_test/test.mkv of=/dev/null bs=32k && sync" (44G File) | 1201 | 142 | 47 | 2.2 | |
| Samba 44G file to NTFS | 1675 | 76 | 66 | 39 | |
| Samba 44G file to BTRFS | 1607 | 36 | 43 | 1 | |
| Samba 44G file to EXT2 | 1226 | 26 | 60 | 1 | |
| Samba 44G file to EXT4 | 1237 | 27 | 55 | 1 | |
| Samba 44G file to EXFAT | 2424 | 78 | 32 | 2 | |
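
To compare the write rows as throughput rather than seconds, divide the bytes written by the elapsed time. A small sketch, taking the Time (secs) value as wall-clock seconds (my assumption) and dd's bs=32k as 32768 bytes. mb_per_sec is a made-up helper name:

```shell
#!/bin/sh
# mb_per_sec BYTES SECONDS -> MB/s with one decimal (1 MB = 1,000,000 bytes)
mb_per_sec() {
    awk -v b="$1" -v s="$2" 'BEGIN { printf "%.1f\n", b / s / 1000000 }'
}

# bs=32k count=2000000 writes 32768 * 2000000 bytes
bytes=$((32768 * 2000000))

mb_per_sec "$bytes" 1844   # NTFS 32k write row: prints 35.5
mb_per_sec "$bytes" 1596   # ext4 32k write row: prints 41.1
```

So on that test the gap between NTFS and ext4 is roughly 5 to 6 MB/s of sustained write speed, on top of the much higher CPU use for NTFS.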

So let's run down the benefits of each filesystem available for OSMC

exfat

Well, again not native to Linux, but Microsoft made this to bridge the gap, so it has more support than NTFS (IMHO). It still has technical issues, though, and you might need some extra tools on Linux to work with it, like exfat-fuse and exfat-utils.

So how does it handle? Somewhat OK. It's basically FAT64, and you can use the drive on both Windows and Linux without issues in terms of speed. However, there are plenty of reasons not to use this as a filesystem (see link), and it does suffer from slow transfer speeds when it comes to Samba.

exFAT is for SD media, not spinning disks or SSDs. It was created for 2 purposes.

- Get a new patent, so MSFT could demand payment from every SD user and device that uses SD cards of any sort. Video cameras, PnS cameras, DSLRs, MP3 players, Phones, … that’s billions of devices all providing a small payment for every device.

- Better support 32G and larger storage devices. It is possible to use FAT32 with larger storage devices, it just becomes extremely inefficient with allocations. MSFT changed the FAT32 code to prevent formatting partitions over 32GB with it, but it wasn’t always that way and Linux will format larger partitions, if you ask it.

ext4

This is the native filesystem for OSMC and the most recommended one, since it works “out of the box”. It can also work under Windows with additional drivers such as the Paragon Software suite (see link).

It's less prone to corruption, unless you're running with a bad charger or cable. Defragmentation is a non-issue since the kernel has good management skills.
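
If you decide to go with ext4, formatting from the OSMC command line might look like the sketch below. Be careful, this erases everything on the partition. /dev/sda1 and the label are placeholders, and format_ext4 is a made-up helper name, not an OSMC tool:

```shell
#!/bin/sh
# format_ext4 is a hypothetical helper. WARNING: mkfs.ext4 wipes the partition.
# $1 = partition (e.g. /dev/sda1), $2 = optional volume label (default: media)
format_ext4() {
    dev=$1
    label=${2:-media}
    # Only accept a real block device.
    if [ ! -b "$dev" ]; then
        echo "not a block device: $dev" >&2
        return 1
    fi
    # Do not format something that is currently mounted.
    if grep -q "^$dev " /proc/mounts; then
        echo "$dev is mounted, unmount it first" >&2
        return 1
    fi
    # Create the ext4 filesystem with the given label.
    sudo mkfs.ext4 -L "$label" "$dev"
}

# Example (assumed names): format_ext4 /dev/sda1 media
```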

btrfs

The hidden contender and my personal favorite. For this filesystem you need to install some additional tools (btrfs-tools, btrfs-progs). It has many unique features such as auto-defrag, scrubbing, balancing metadata etc. It's not recommended for newbies, but for those that love to experiment, hell, give it a go, I promise that you will have fun.

And again, there are drivers for this under Windows, such as WinBtrfs (see link) and Paragon Software Suite (see link).

The downside of btrfs is that it can be a little bit heavier (2-5%) on low-end machines, but it pays that back with its features. BTRFS lies about storage: the du and df commands on a BTRFS filesystem cannot be trusted. The Copy-on-Write (CoW) nature of BTRFS has positives and negatives.
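
Since plain du and df can mislead on BTRFS, the btrfs tool has its own space reports. A small sketch, assuming btrfs-progs is installed and using the /mnt/btrfs_test mount point from the test above as an example. btrfs_space is a made-up helper name:

```shell
#!/bin/sh
# btrfs_space is a hypothetical helper that prints BTRFS's own space reports.
# $1 = mount point of a BTRFS filesystem
btrfs_space() {
    mnt=$1
    if [ ! -d "$mnt" ]; then
        echo "no such directory: $mnt" >&2
        return 1
    fi
    if ! command -v btrfs >/dev/null 2>&1; then
        echo "btrfs command not found" >&2
        return 1
    fi
    # Allocation broken down into data, metadata and system chunks.
    sudo btrfs filesystem df "$mnt"
    # Overall view, including unallocated space.
    sudo btrfs filesystem usage "$mnt"
}

# Example (assumed path): btrfs_space /mnt/btrfs_test
```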

But how do I add more stuff to my HDD if I can't plug it into my computer?

Well, I'm glad you read this far. First off, go into the OSMC Store and download Samba. This will share your drive on the network. We are after all living in 2019, so why move a USB drive back and forth?

Just share that drive, and from Windows Explorer type \\osmc (name may vary) to get to the shared drive and dump your stuff.